Businesses heavily rely on the data they receive in their interactions with the market, other companies, and users. From tailoring prices to predicting demands, needs, trends, and more, data assists decision-making in multiple ways. So, using big data in 2025 is mandatory for companies of all sizes. This is where big data collection companies play a crucial role, providing businesses with the valuable insights they need to thrive in today’s data-driven world.

However, while over 97% of businesses worldwide have invested in big data, only 24% of these companies use the collected data to analyze and make informed decisions. Considering that data created, captured, copied, and consumed worldwide is expected to reach 181 zettabytes in 2025, a lot of valuable information is left unused.

In this post, you’ll discover how to collect big data and learn what tools and techniques can be used for data collection. Besides, you’ll review several real-life cases by Intelliarts where we show the use of big data in businesses and the value of such interventions.

How is big data collected?

“Business data is an endless source of valuable insights. And we are to help companies extract the most from it through technology.”

Volodymyr Mudryi, data scientist and AI/ML engineer

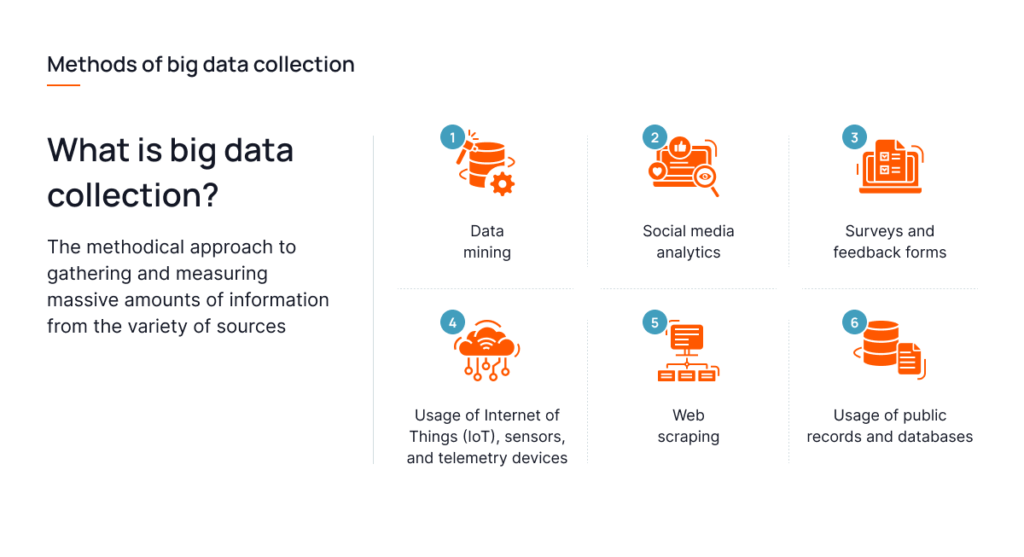

From a certain point of view, methods of big data collection can also be described as its sources, since each one refers to a pool from which data comes. Here are the top six methods of collecting big data that businesses across numerous industries use:

#1 Data mining

Data mining involves extracting valuable information from large datasets to identify patterns, correlations, and trends for decision-making. It utilizes statistical, machine learning, and artificial intelligence techniques to analyze data and generate insights that can inform business strategies, improve customer experiences, and predict future trends. When the data to be mined is gathered by crawling websites, factors such as control over crawling speed and handling of anti-bot measures also influence the choice of crawler tool.

Data types that can be obtained:

- Structured data: Spreadsheets, databases

- Unstructured data: Text, images, audio

- Semi-structured data: XML, JSON

- Time-series data: Stock prices, sensor readings

- Spatial data: Geographic information systems data
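
To make the idea of finding patterns more concrete, here is a minimal sketch of one classic data mining task: counting which items most often appear together. The baskets and the `min_support` threshold are made-up illustration values, and real projects would normally run a dedicated library over far larger datasets.

```python
from collections import Counter
from itertools import combinations

def frequent_pairs(transactions, min_support):
    """Return item pairs that appear together in at least min_support transactions."""
    counts = Counter()
    for items in transactions:
        # Sort and de-duplicate so ("milk", "bread") and ("bread", "milk") count as one pair.
        for pair in combinations(sorted(set(items)), 2):
            counts[pair] += 1
    return {pair: n for pair, n in counts.items() if n >= min_support}

# Hypothetical purchase baskets used only for illustration.
baskets = [
    ["bread", "milk", "eggs"],
    ["bread", "milk"],
    ["milk", "eggs"],
    ["bread", "milk", "butter"],
]
pairs = frequent_pairs(baskets, min_support=2)
```

Pairs that clear the threshold are candidates for a correlation worth investigating, for example products to recommend together.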

#2 Social media analytics

Social media analytics involves analyzing data from social media platforms to understand user behavior, sentiments, and trends. It provides insights into audience preferences, content engagement, and social media performance, helping businesses tailor their marketing strategies, monitor brand reputation, and identify influencers for TikTok, Instagram, and other social media platforms.

Data types that can be obtained:

- Text: Posts, comments, tweets

- Unstructured data: Images and videos

- User interactions: Likes, shares, follows

- Demographic data: Age, gender, location

- Sentiment data: Positive, negative, neutral sentiments
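
As a rough illustration of how sentiment data can be derived from post text, the sketch below labels posts with a tiny word list. The lexicon and the sample posts are assumptions made for this example; production systems typically use trained language models rather than word counting.

```python
import re
from collections import Counter

# Tiny illustrative lexicon; real vocabularies are far larger.
POSITIVE = {"love", "great", "excellent", "happy"}
NEGATIVE = {"hate", "bad", "terrible", "slow"}

def label_sentiment(text):
    """Label a post as positive, negative, or neutral by counting lexicon hits."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

# Made-up posts for illustration.
posts = [
    "Love the new app, great update!",
    "Checkout is slow and the support is terrible.",
    "Order arrived on Tuesday.",
]
summary = Counter(label_sentiment(p) for p in posts)
```

Aggregating the labels, as `summary` does, gives the kind of positive, negative, and neutral breakdown listed above.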

#3 Surveys and feedback forms

Surveys and feedback forms are tools used to collect direct input from individuals on various topics, such as customer satisfaction, product feedback, or employee engagement. They provide structured data that can be analyzed to understand preferences, opinions, and experiences.

Data types that can be obtained:

- Quantitative data: Ratings, scores, numerical responses

- Qualitative data: Open-ended text responses

- Demographic data: Age, gender, occupation

- Psychographic data: Interests, values, lifestyle

#4 Usage of Internet of Things (IoT), sensors, and telemetry devices

The Internet of Things (IoT), sensors, and telemetry devices are used to collect real-time data from various sources, such as appliances, vehicles, industrial equipment, and environmental monitors. This data is valuable for predictive maintenance for manufacturing, energy management, environmental monitoring, and improving operational efficiency.

Read also: Renewable Energy Challenges

Data types that can be obtained:

- Sensor data: Temperature, humidity, pressure

- Telemetry data: Location, speed, acceleration

- Operational data: Machine status, energy consumption

- Environmental data: Air quality, soil moisture
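
Device messages often arrive as small JSON payloads. The following sketch, which assumes hypothetical field names (`device_id`, `temperature_c`, `humidity_pct`) and made-up valid ranges, shows how a collector might parse one reading and flag implausible values before storing it.

```python
import json

# Assumed plausible ranges per metric; real limits depend on the device and its datasheet.
VALID_RANGES = {"temperature_c": (-40.0, 85.0), "humidity_pct": (0.0, 100.0)}

def parse_reading(raw):
    """Parse one JSON telemetry message and list the metrics that fall outside VALID_RANGES."""
    reading = json.loads(raw)
    out_of_range = [
        name
        for name, (low, high) in VALID_RANGES.items()
        if name in reading and not low <= reading[name] <= high
    ]
    return reading, out_of_range

# Hypothetical message from a device called "pump-7".
message = '{"device_id": "pump-7", "temperature_c": 91.5, "humidity_pct": 40.2}'
reading, problems = parse_reading(message)
```

Checking values at ingestion time keeps faulty sensor readings from skewing later analysis such as predictive maintenance.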

#5 Web scraping

Web scraping is a technique used to extract data from websites. It involves programmatically accessing web pages and extracting relevant information, such as product prices, stock quotes, news articles, and social media posts. Web scraping is commonly used for market research, competitive analysis, price monitoring, and sentiment analysis.

Data types that can be obtained:

- Text data: Articles, product descriptions

- Numerical data: Prices, statistics

- Metadata: Page titles, keywords

- Structured data: Tables, lists
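
The extraction step can be sketched with Python's standard library alone. The HTML snippet, the `price` class name, and the `PriceParser` class are invented for illustration; production scrapers usually rely on dedicated tools and fetch the page over HTTP first.

```python
from html.parser import HTMLParser

class PriceParser(HTMLParser):
    """Collect numeric prices from elements whose class list includes 'price'."""

    def __init__(self):
        super().__init__()
        self.prices = []
        self._in_price = False

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "price" in classes:
            self._in_price = True

    def handle_endtag(self, tag):
        # Simplification: assumes price elements contain no nested tags.
        self._in_price = False

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.prices.append(float(data.strip().lstrip("$")))

# In a real scraper this HTML would come from an HTTP response; it is inlined so the sketch runs offline.
html = """
<ul>
  <li><span class="name">Kettle</span> <span class="price">$24.99</span></li>
  <li><span class="name">Toaster</span> <span class="price">$31.50</span></li>
</ul>
"""
parser = PriceParser()
parser.feed(html)
```

After `feed` returns, `parser.prices` holds the extracted numbers, ready for price monitoring or comparison.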

Read also: Ethics of Data Collection

#6 Usage of public records and databases

Public records and databases are sources of structured data maintained by government agencies, public institutions, and private organizations. These records include demographic data, economic indicators, health statistics, legal documents, and geographic information.

Data types that can be obtained:

- Demographic data: Census data, population statistics

- Economic data: Employment rates, GDP figures

- Health data: Disease prevalence, healthcare outcomes

- Legal records: Court cases, property deeds

- Geographic data: Maps, spatial datasets

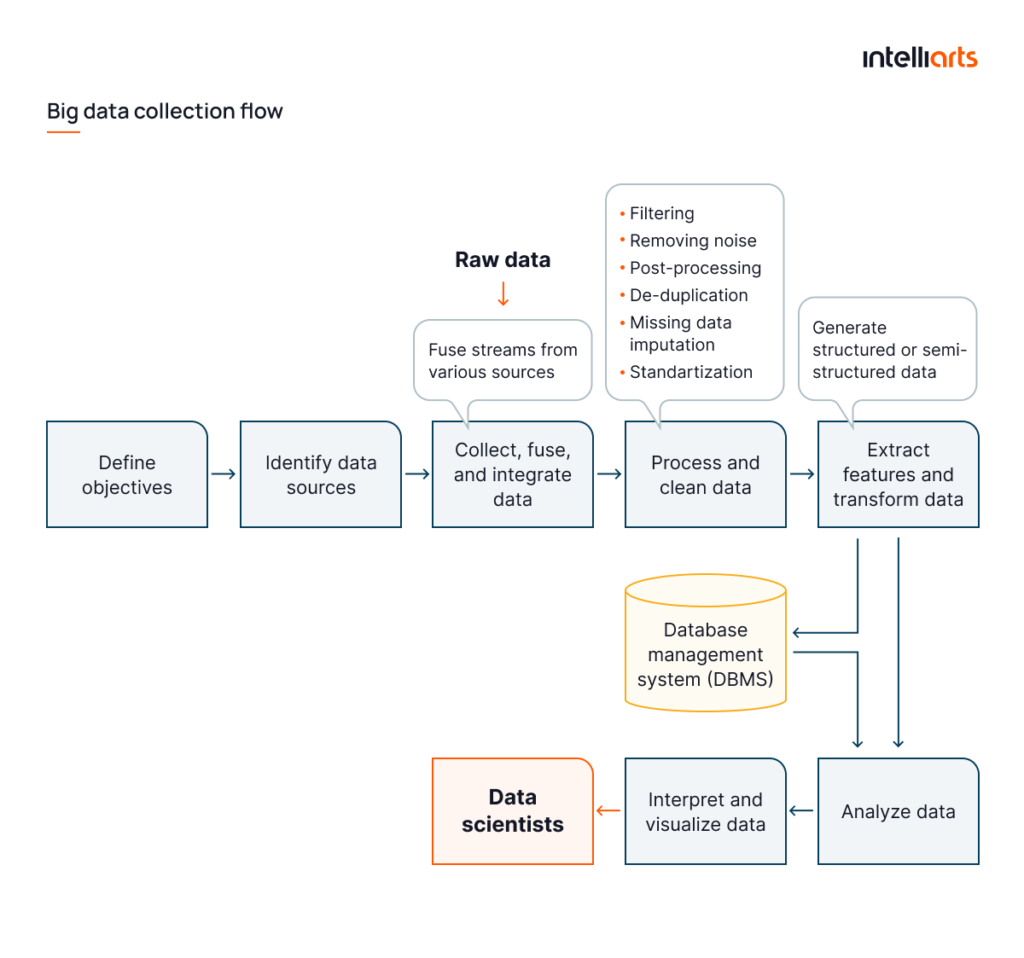

While the sources and data types in the methods detailed differ, they share a pretty similar implementation procedure. Learn how you can implement any of these six methods of collecting big data in the infographic below:

Looking to implement a big data collection system? Drop Intelliarts a line, and let our expert data engineers contribute to your best project.

Tools and techniques for big data collection

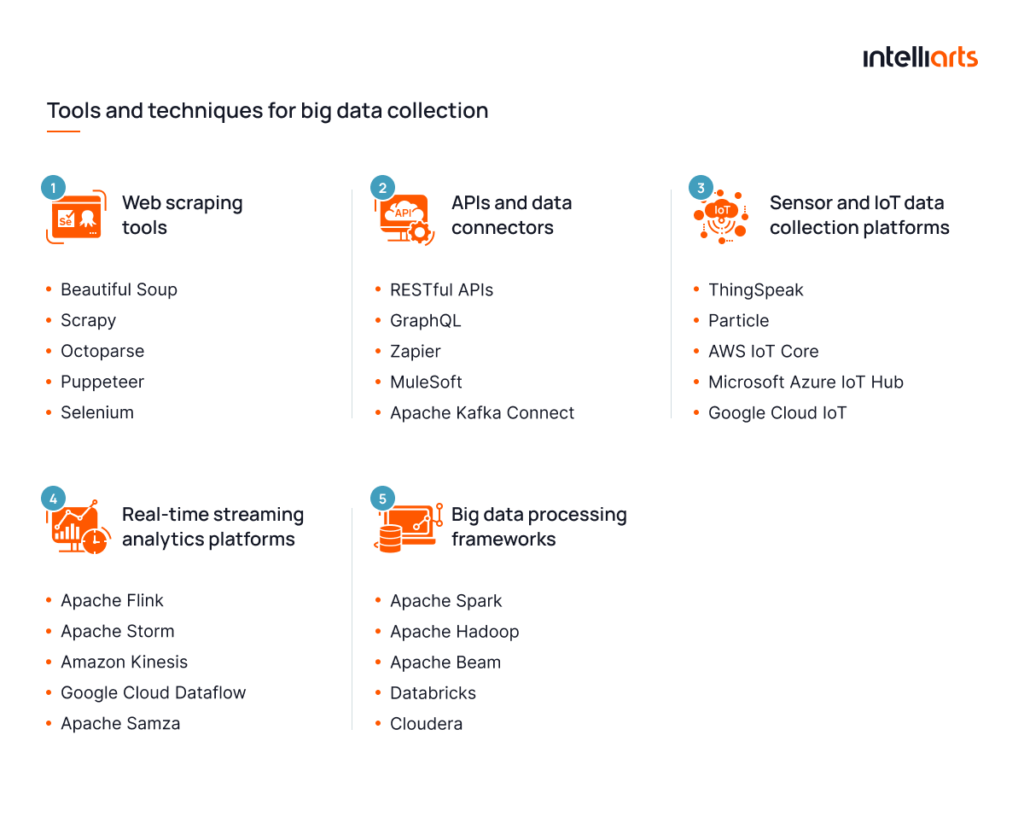

Drawing on Intelliarts’ expertise with big data, here is a review of the tools and techniques used to obtain raw data with the collection methods described above:

#1 Web scraping tools

Web scraping tools are software applications designed to extract data from websites. They automate the process of accessing web pages, navigating through them, and extracting relevant information, such as text, images, and links. Web scraping tools are used for data collection, market research, and competitive analysis.

Examples of web scraping tools:

- Beautiful Soup

- Scrapy

- Octoparse

- Puppeteer

- Selenium

For SEO-focused data gathering, tools like SE Ranking SEO API can be used to retrieve keyword rankings, backlink profiles, and other performance metrics to enrich your big data strategy.

#2 APIs and data connectors

Application Programming Interfaces (APIs) and data connectors are interfaces and tools that allow for seamless integration and data exchange between different software applications, databases, and platforms. They enable the retrieval, updating, and sharing of data across various systems, facilitating interoperability and data integration.

Examples of APIs and data connectors:

- RESTful APIs

- GraphQL

- Zapier

- MuleSoft

- Apache Kafka Connect
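
When data is pulled through an API, results usually arrive page by page. Below is a minimal sketch of cursor-based pagination. The response shape (`items`, `next_cursor`) is a hypothetical convention rather than the contract of any specific API, and `fake_fetch` stands in for a real HTTP call so the example runs offline.

```python
def collect_all(fetch_page):
    """Follow cursor-based pagination until the API stops returning a next cursor."""
    records, cursor = [], None
    while True:
        page = fetch_page(cursor)
        records.extend(page["items"])
        cursor = page.get("next_cursor")
        if not cursor:
            return records

# Stand-in pages keyed by cursor; a real client would request them over HTTP.
FAKE_PAGES = {
    None: {"items": [1, 2], "next_cursor": "p2"},
    "p2": {"items": [3, 4], "next_cursor": "p3"},
    "p3": {"items": [5], "next_cursor": None},
}

def fake_fetch(cursor):
    return FAKE_PAGES[cursor]

all_items = collect_all(fake_fetch)
```

Passing the fetch function in as a parameter keeps the pagination logic separate from the transport, which also makes it easy to test.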

#3 Sensor and IoT data collection platforms

Sensor and IoT data collection platforms are systems designed to collect, manage, and analyze data from sensors and Internet of Things (IoT) devices. These platforms enable real-time monitoring, data aggregation, and analysis of sensor-generated data for applications in smart cities, healthcare, agriculture, and industrial automation.

Examples of IoT data collection platforms:

- ThingSpeak

- Particle

- AWS IoT Core

- Microsoft Azure IoT Hub

- Google Cloud IoT Core (retired by Google in August 2023)

#4 Real-time streaming analytics platforms

Real-time streaming analytics platforms are software solutions that process and analyze data streams in real-time. They are used to monitor and respond to events as they occur, enabling immediate insights and actions based on live data for applications like fraud detection, social media analysis, and real-time dashboards.

Examples of real-time streaming analytics platforms:

- Apache Flink

- Apache Storm

- Amazon Kinesis

- Google Cloud Dataflow

- Apache Samza
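
A core idea behind these platforms is windowing: grouping events into fixed time slices and aggregating each slice. The sketch below shows a tumbling (non-overlapping) window count in plain Python over a small, made-up list of events. Real streaming engines apply the same idea to unbounded streams and also handle late or out-of-order events, which this sketch does not.

```python
from collections import defaultdict

def tumbling_window_counts(events, window_seconds):
    """Count events per key in fixed, non-overlapping time windows."""
    counts = defaultdict(int)
    for timestamp, key in events:
        # Integer division maps each timestamp to the start of its window.
        window_start = (timestamp // window_seconds) * window_seconds
        counts[(window_start, key)] += 1
    return dict(counts)

# (timestamp_in_seconds, event_type) pairs; values are made up for illustration.
events = [(100, "click"), (105, "click"), (119, "purchase"), (121, "click"), (150, "click")]
counts = tumbling_window_counts(events, window_seconds=60)
```

Each resulting key pairs a window start time with an event type, which is the shape a real-time dashboard would typically consume.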

#5 Big data processing frameworks

Big data processing frameworks are software platforms designed to handle the processing and analysis of large datasets. They provide the necessary infrastructure for distributed computing, allowing for scalable and efficient handling of big data. These frameworks support a variety of big data processing tasks, including batch processing, stream processing, and machine learning.

Examples of big data processing frameworks:

- Apache Spark

- Apache Hadoop

- Apache Beam

- Databricks

- Cloudera

As you can see, some of these tools can serve more than one purpose in big data collection. With them, it’s often possible to build a relatively simple yet capable data retrieval system, especially when only a limited variety of data types is needed.

Establishing a fully-fledged system for data retrieval requires substantial data science expertise and experience. Drop the Intelliarts team a line and let our specialists assist you.

You can also learn more about data collection from the video below:

Best big data collection practices for business owners

Creating a seamless process for gathering data is half the battle. Yet, there is more to consider when you intend to make use of the vast data you generate in your business operations or intend to collect it from external sources. Here are the best practices that the Intelliarts team of AI and ML engineers sticks to and advises business owners to adopt:

#1 Data source diversification

This refers to expanding the variety of data sources to capture a more comprehensive and nuanced view of the business landscape. It reduces the reliance on a single source, thereby minimizing biases and enhancing the robustness of data-driven insights.

Measures to consider:

- Define key data needs

- Source from diverse channels

- Integrate and standardize data

- Regularly update sources
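
The "integrate and standardize" measure can be illustrated with a short sketch that maps records from two sources onto one shared schema. Both source layouts (a CRM export and a webshop table) and all field names are hypothetical.

```python
from datetime import datetime

def from_crm(row):
    """Map a hypothetical CRM export row to the shared schema."""
    return {
        "email": row["Email"].strip().lower(),
        "signup_date": datetime.strptime(row["Signup"], "%m/%d/%Y").date().isoformat(),
        "source": "crm",
    }

def from_webshop(row):
    """Map a hypothetical webshop row to the shared schema."""
    return {
        "email": row["user_email"].strip().lower(),
        "signup_date": row["created_at"][:10],  # already ISO 8601, keep the date part
        "source": "webshop",
    }

# Made-up sample rows from each source.
crm_rows = [{"Email": " Ann@Example.com ", "Signup": "03/15/2024"}]
shop_rows = [{"user_email": "bob@example.com", "created_at": "2024-04-02T09:30:00Z"}]
unified = [from_crm(r) for r in crm_rows] + [from_webshop(r) for r in shop_rows]
```

Keeping one small adapter per source means a new channel can be added without touching the analysis that reads the unified records.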

#2 Implementing real-time data analysis

One of the key benefits of real-time data analysis is that it lets businesses react promptly to emerging trends and issues, providing a competitive edge by enabling immediate decision-making and action.

Measures to consider:

- Establish data streaming

- Deploy real-time analytics

- Set up alerts and triggers

- Ensure system scalability
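
As an example of the "alerts and triggers" measure, here is a minimal sketch that raises an alert whenever the rolling average of a metric crosses a threshold. The latency values, window size, and threshold are made-up numbers chosen for illustration.

```python
from collections import deque

def rolling_alerts(values, window, threshold):
    """Yield (index, mean) whenever the mean of the last `window` values exceeds `threshold`."""
    recent = deque(maxlen=window)
    for i, value in enumerate(values):
        recent.append(value)
        if len(recent) == window:
            mean = sum(recent) / window
            if mean > threshold:
                yield i, mean

# Made-up response times in milliseconds.
latencies_ms = [120, 130, 125, 400, 420, 410, 130]
alerts = list(rolling_alerts(latencies_ms, window=3, threshold=300))
```

Averaging over a window rather than reacting to single values reduces false alarms from one-off spikes, at the cost of a slightly delayed alert.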

#3 Building a data-driven culture

Creating an environment where data is at the forefront of decision-making processes encourages informed decisions, fosters innovation, and enhances operational efficiency.

Measures to consider:

- Enhance data literacy

- Promote data-driven decisions

- Align data and business goals

- Reward data achievements

#4 Leveraging predictive analytics

Predictive analytics enables businesses to anticipate future trends and customer behaviors, facilitating proactive strategies and optimizing outcomes.

Measures to consider:

- Gather relevant historical data

- Select and develop models

- Train and validate models

- Implement and refine models

#5 Customizing data collection strategies

Tailoring data collection to specific business objectives ensures that the data gathered is relevant, actionable, and aligned with the company’s goals.

Measures to consider:

- Set clear collection objectives

- Choose suitable methods and tools

- Design and implement processes

- Regularly review and adjust

#6 Utilizing data for enhanced customer experience

By analyzing customer data, businesses can personalize interactions and services, leading to increased satisfaction, loyalty, and ultimately, revenue growth.

Measures to consider:

- Analyze customer interactions

- Identify personalization insights

- Implement tailored strategies

- Measure and optimize experiences

#7 Ensuring data security and privacy

Protecting data integrity and maintaining privacy are critical for building trust with customers and complying with legal regulations, thereby safeguarding the business’s reputation and operations.

Measures to consider:

- Conduct security audits

- Implement protective measures

- Comply with regulations

- Train staff on best practices
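
One common protective measure is pseudonymizing personal identifiers before data reaches analysts. The sketch below uses a keyed hash (HMAC-SHA256) from Python's standard library. The record layout and the placeholder key are assumptions for illustration, and whether this approach satisfies a particular regulation has to be assessed case by case.

```python
import hashlib
import hmac

def pseudonymize(value, secret_key):
    """Replace an identifier with a keyed SHA-256 digest so records can be joined without exposing the raw value."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()

# Placeholder key for illustration; in practice load it from a secrets manager, never from source code.
SECRET_KEY = b"replace-me"

# Hypothetical customer record.
record = {"email": "Ann@Example.com", "plan": "pro"}
safe_record = {**record, "email": pseudonymize(record["email"], SECRET_KEY)}
```

Because the same input and key always produce the same digest, datasets can still be linked on the pseudonymized field.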

#8 Continuous improvement and adaptation

Staying agile and continuously refining data practices ensures that businesses remain competitive and responsive to changing market dynamics and technological advancements.

Measures to consider:

- Monitor and evaluate strategies

- Stay informed about trends

- Foster innovation

- Implement feedback loops

Discover how Intelliarts built a big data pipeline in another of our blog posts.

Success stories by Intelliarts

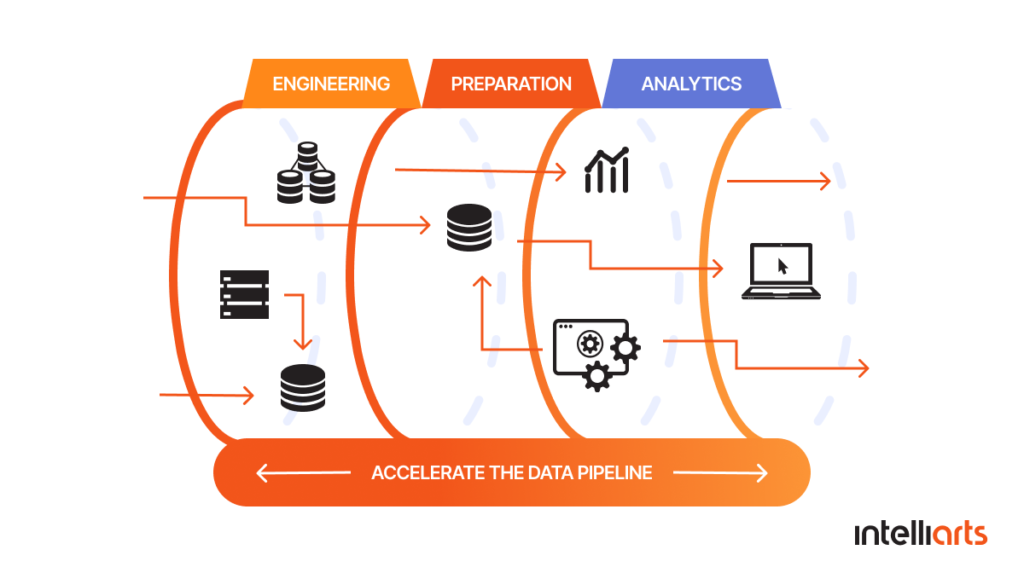

Here at Intelliarts, we have substantial experience with big data trends that go beyond data collection. We have created end-to-end data pipelines and fully-fledged ML solutions that help make use of this data in business. Here are short summaries of some of our big data collection examples:

DDMR data pipeline case study

The challenge was to streamline data acquisition from Firefox and enhance the efficiency of handling extensive clickstream data. Since the customer’s sales department relies upon effective management of big data, proper execution of the project was critical.

The solution was the creation of an end-to-end data pipeline, which encompasses data collection, storage, processing, and delivery. We made it possible to process hundreds of terabytes of data per day, helping the customer grow to the point of operating clusters with 2000 cores and 3700 GB of RAM.

Now, the customer can process large volumes of data and translate them into actionable insights. This enhanced the reliability of sales processes and reduced human error, resulting in a severalfold increase in annual revenue and a larger base of loyal customers.

Discover more about the DDMR data pipeline case study.

Processing IoT data for OptiMEAS

The customer’s request was to help to gather and utilize the data they obtained from their IoT devices more effectively.

To assist the customer, the Intelliarts team created a centralized data storage, data processing solution, and a data visualization tool along with a permission management system.

The end solution can process massive volumes of IoT data and provide the OptiMEAS team with insights that facilitate decision-making. Not only did it help to utilize the value of data fully, but it also enabled our partner to provide better products and services to end-users.

Discover more about the OptiMEAS case study.

ML-powered list stacking and predictive modeling for real estate business

The customer’s request was to help use their large volume of data to predict which homeowners are going to sell their property.

To assist the customer, the Intelliarts team created a complex ML solution consisting of the realtor model and the investor model. The solution was trained on a huge dataset of around 60 million data records. In the dataset, we distinguished 900+ attributes of property characteristics, 400 attributes of demographic data, and skip tracing data. The finished solution ingests about 200 GB of raw data every month.

The model reaches an accuracy of 71%, which is high compared to the industry average.

As a business outcome, the delivered solution helps to reach out to the most motivated homeowners, resulting in lower marketing costs, more precise targeting, and better ROI.

Discover more about the real estate business case study.

Final Take

Effective big data collection and analysis helps businesses gain the insights they need to make informed decisions and stay competitive. By diversifying data sources, implementing real-time analysis, building a data-driven culture, leveraging predictive analytics, and using the other practices listed above, companies can maximize the value of their data and drive success.

Implementation of big data collection, as well as other ML developments needed for making use of big data, requires substantial expertise and knowledge. Entrust your project to Intelliarts, an AI and ML development agency with 100+ AI and ML professionals on the team and over 24 years of experience in the market delivering tailored solutions.

FAQ

1. How to organize big data?

Organizing big data involves structuring and storing data efficiently. This typically includes using data lakes or data warehouses, implementing data governance practices, and employing data management tools to ensure data is accessible, secure, and usable for analysis.

2. How do big companies collect data?

Big companies collect data through various methods, such as web scraping, social media analytics tools, IoT devices, customer transactions, and public records. They often use advanced tools and technologies to gather and process large volumes of data.

3. What are the best tools for collecting big data?

The best big data collection tools depend on the specific needs of the project. Popular options include Apache Hadoop for distributed data processing, Apache Kafka for real-time data streaming, and web scraping tools like Scrapy for extracting data from websites.