Solution Highlights

- Created a real estate predictive modeling ML solution that ranks homeowners by their motivation to sell a house

- Increased the number of clients from 5-7 to 200+ companies over 2 years

- Reached revenue of $5-7 million per quarter

- Achieved prediction accuracy of over 70%, which is above the industry standard

- Built a system capable of processing 8 TB of data

About the Project

Customer:

Our partner is an AI-driven real estate company (under NDA) that started as a small startup and increased its monthly revenue by 600% in 12 months by adopting AI and machine learning.

Challenges & Project Goals:

The customer came up with the idea of using large real estate datasets to predict which homeowners are going to sell their property before the houses are put up for sale. With this idea in mind, the company wanted to deliver a significant competitive advantage to its end-users, real estate businesses, so that they could focus their marketing efforts on the most motivated sellers and improve ROI.

Solution:

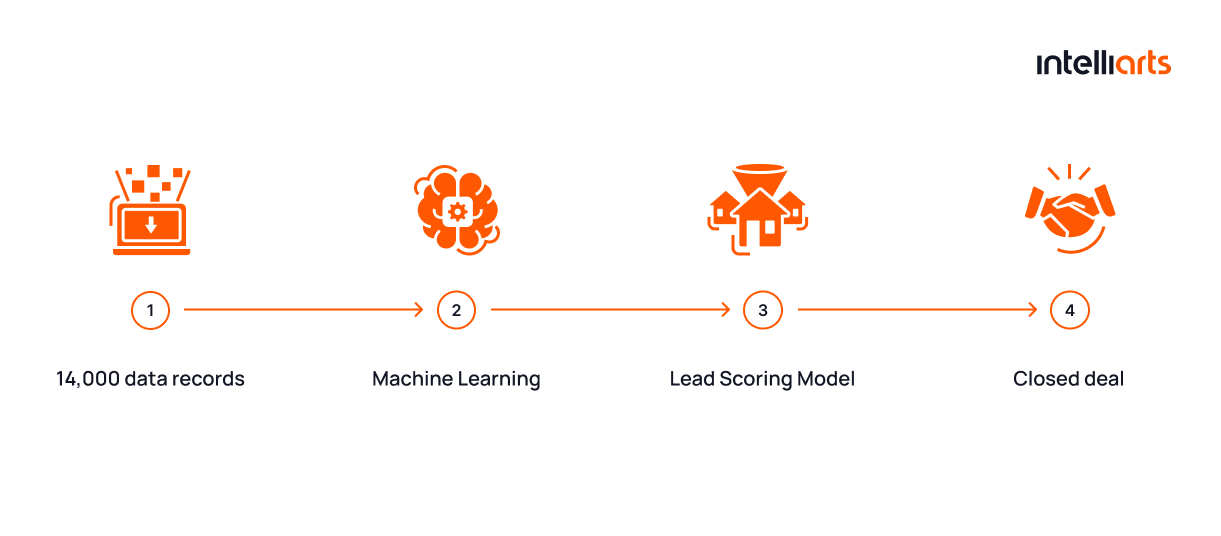

Working together with the customer, our data scientists built two types of machine learning models:

- The realtor model predicts the probability of a house being sold in the next few months, so real estate agents can contact potential sellers before the property appears on the Multiple Listing Service (MLS), the world’s biggest private database for buying and selling houses.

- The investor model predicts whether a discount could be agreed on a particular property and estimates the minimum and maximum size of that discount.

Importantly, the models are built separately for each state where the customer operates, since the volume of data and the behavior insights vary significantly from state to state.
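The per-state setup can be illustrated with a minimal sketch. It assumes a toy dataset and a placeholder "model" that is only the historical sale rate in each state. The field names `state` and `sold` and the helper `train_per_state` are hypothetical and stand in for the real training pipeline, which is not described here.

```python
from collections import defaultdict


def train_per_state(records):
    """Group records by state and fit one placeholder model per state.

    The "model" is only the historical sale rate in that state (an
    assumption for illustration); a real system would fit a proper
    ML model on each group instead.
    """
    by_state = defaultdict(list)
    for rec in records:
        by_state[rec["state"]].append(rec)

    models = {}
    for state, rows in by_state.items():
        sold_count = sum(1 for r in rows if r["sold"])
        models[state] = sold_count / len(rows)
    return models


# Hypothetical toy records; real inputs would carry many more attributes.
records = [
    {"state": "TX", "sold": True},
    {"state": "TX", "sold": False},
    {"state": "FL", "sold": True},
    {"state": "FL", "sold": True},
]
models = train_per_state(records)
print(models)
```

Keeping one model per state means a state with little data or unusual seller behavior does not distort predictions for the others, at the cost of maintaining more models.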

Business Value Delivered:

- The ML-powered list stacking and predictive modeling solution provides real estate businesses with the unique opportunity to optimize their marketing effort by reaching out to the most motivated homeowners. This also means lower marketing costs, more precise targeting, and better ROI.

- The models deliver accurate results. For example, the realtor model can predict 71% of the homeowners who are most likely to sell, which is significantly better than the industry average achieved with traditional methods.
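One common way to read a figure like this is as recall: the share of actual sellers that end up in the top-ranked part of the list. That reading is an assumption, not a statement of how the 71% was measured. The sketch below uses hypothetical scores and outcomes, and the function name `recall_at_top_fraction` is illustrative only.

```python
def recall_at_top_fraction(scores, sold, fraction):
    """Share of actual sellers found in the top `fraction` of the ranked list."""
    # Rank homeowners from the highest to the lowest score.
    ranked = sorted(zip(scores, sold), key=lambda pair: pair[0], reverse=True)
    cutoff = max(1, int(len(ranked) * fraction))
    sellers_in_top = sum(1 for _, was_sold in ranked[:cutoff] if was_sold)
    total_sellers = sum(1 for was_sold in sold if was_sold)
    return sellers_in_top / total_sellers if total_sellers else 0.0


# Hypothetical scores (0-100) and whether each homeowner later sold.
scores = [95, 80, 70, 40, 30, 20, 10, 5]
sold = [True, True, False, False, True, False, False, False]
recall = recall_at_top_fraction(scores, sold, 0.5)
print(round(recall, 2))
```

In this toy data, two of the three actual sellers fall in the top half of the ranking, so marketing to that half alone would reach two thirds of them.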

Technology Solution

PoC Development Stage

Our cooperation with the company started with PoC development. The customer asked us to build an ML-based realtor model to check whether the list-stacking solution was worth developing, since they would have to buy a very expensive dataset to make it work.

Intelliarts delivered the PoC in two months, including uploading and processing the data. For this purpose, we used a sample dataset requested directly from the customer’s vendors.

Data Processing Stage

Once the customer confirmed they were fully satisfied with the results and gave us their approval, the team moved on to building the ML-based list stacking and predictive modeling solution.

As mentioned, the company owned a huge dataset that we had to process. Altogether, it comprised around 60 million data records with 1,400 attributes, and the customer had spent $90,000 on the data alone. This data can be roughly divided into three groups:

- More than 900 attributes of property characteristics. The company had all sorts of data about the houses they were tracking, including mortgage and foreclosure data, public records from the local tax assessor, price estimations, and real estate transactions.

- 400 attributes of demographic data. This type of data included everything from income to age groups and derogatory records. We used it to help the investor model assess how urgently the homeowner needed to sell the house and, hence, whether a real estate investor could expect a discount.

- Skip tracing data. In real estate, skip tracing refers to the process of locating a property owner and their up-to-date contact information. The company maintained and regularly updated a database of skip tracing data.

With this large dataset in hand, we planned to build an advanced real estate solution with realtor and investor models. But first, our data engineers had to handle data ingestion. The business had started buying data a few months before the processing began, and since the data is updated monthly in batches of 100-200 GB, a certain amount of data had already accumulated.

After data ingestion, we had to address the data processing challenge. Given the large volume and breadth of the data, we had to work out how to process it efficiently and in a timely manner. In more detail:

- As noted, we received around 200 GB of raw data every month, which we copied to Amazon S3 from the location where the data contractor had uploaded it.

- Then, our data engineers transformed the data into a format that is easily accessible to the ML engineers, following a medallion architecture approach. Its idea is to store data in three layers: bronze for raw data; silver for data from different providers merged into one storage; and gold for data with the predictions needed by end-users.

- For data processing, we chose AWS Glue, which suited us best because it could handle this volume of data within an acceptable time frame. In addition, our data scientists opted for Amazon Aurora for user data storage because of its scalability.

- Glue pipelines worked well for uploading the data to storage, processing it, and preparing the datasets for prediction. For the ML processes, we chose Apache Airflow, a tool for scheduling workflows. This way, the team refined the data, generated features, and prepared the right dataset from the raw data received to be able to make accurate predictions.
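The bronze, silver, and gold layers described above can be sketched in plain Python. This is a simplified in-memory illustration, not the Glue or Airflow code used in the project: the provider names, field names, and the helpers `to_silver`, `to_gold`, and `placeholder_score` are all hypothetical.

```python
def to_silver(bronze_batches):
    """Merge raw per-provider batches into one record per property ID."""
    silver = {}
    for provider, rows in bronze_batches.items():
        for row in rows:
            merged = silver.setdefault(row["property_id"], {})
            # Keep every non-ID field from each provider on the merged record.
            merged.update({k: v for k, v in row.items() if k != "property_id"})
    return silver


def to_gold(silver, score_fn):
    """Attach a prediction to each merged record for end-users."""
    return {
        pid: {**fields, "score": score_fn(fields)}
        for pid, fields in silver.items()
    }


def placeholder_score(fields):
    # Stand-in for the trained models (assumption); returns a fixed value.
    return 50


# Bronze: raw data as delivered, keyed by a hypothetical provider name.
bronze = {
    "property_vendor": [{"property_id": 1, "assessed_value": 250000}],
    "demographic_vendor": [{"property_id": 1, "owner_age_group": "55-64"}],
}

silver = to_silver(bronze)
gold = to_gold(silver, placeholder_score)
print(gold)
```

Separating the layers this way keeps the raw deliveries untouched, so the merged and scored datasets can be rebuilt whenever the merging logic or the models change.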

Model Development Stage

Only then did we train the models. Both the realtor and investor products are regression models built on historical data:

- For the realtor product, we fed the model with the three types of data mentioned above to get a score from 0 to 100. The higher the score, the more likely the homeowner is to sell the house. Internally, we ran up to 8 different models to produce the score the user actually saw. For example, the score could be based on the person’s data points, housing properties, etc. As a result, the model was complex, yet able to provide accurate results.

- For the investor product, we fed the model with transaction data for similar houses and their price estimations to estimate the approximate price of a new house. By comparing the transaction data to similar cases and using the data about the owner’s current state of affairs, we could also predict whether the person planned to sell the house and whether the company could expect a discount. Again, the range of variables made the model complex but improved its results.
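To illustrate how several internal models can feed one user-facing score from 0 to 100, here is a minimal sketch. The blending rule (a weighted average of sub-model probabilities) is an assumption made for the example; the actual combination logic is not described in this case study, and `combined_score` is a hypothetical helper.

```python
def combined_score(probabilities, weights=None):
    """Blend sub-model probabilities (each in [0, 1]) into a 0-100 score."""
    if not probabilities:
        raise ValueError("at least one sub-model output is required")
    if weights is None:
        # Default to an unweighted average of the sub-models.
        weights = [1.0] * len(probabilities)
    if len(weights) != len(probabilities):
        raise ValueError("weights and probabilities must have the same length")
    total_weight = sum(weights)
    blended = sum(p * w for p, w in zip(probabilities, weights)) / total_weight
    # Clamp before scaling so the result always stays within 0-100.
    return round(100 * min(1.0, max(0.0, blended)))


# Hypothetical outputs of three sub-models for one homeowner.
score = combined_score([0.8, 0.6, 0.7])
print(score)

# Weights let one sub-model count more than another.
weighted = combined_score([1.0, 0.0], weights=[3, 1])
print(weighted)
```

A single blended score keeps the product simple for end-users while leaving room to add, remove, or reweight sub-models behind it.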

Business Outcomes

Together with the customer, we built an ML-based list stacking, property data analytics, and predictive modeling solution that ranks homeowners from the most to the least motivated to sell a house. Although Intelliarts continues to improve the solution, here is what we’ve already accomplished:

- So far, we can predict at least 70% of the homeowners who are going to sell their houses, which is much better than the industry standard.

- The ML-based solution offers a tremendous advantage to end-users, allowing real estate businesses to reduce their marketing costs by targeting the right homeowners and closing more deals.

- With the new models in place, the customer benefits from better sales and an increased number of transactions in a state.

- The company grew significantly during our cooperation, and the advanced ML solution we built fueled its success. In particular, the predictive modeling solution helped the customer raise a few million in additional investment.

- The company now plans to bring in a new data provider with a few thousand extra data fields and expand the business even further.

After 2 years in production, our solution proved to be very successful:

- The number of clients grew from 5-7 to 200+ businesses across the US

- Revenue reached around $5-7 million per quarter, depending on the period

- Over 2000 ML models were trained over this period, with 153 models actively running in production